Need to install Hadoop for a class or a project? It can be hard. It took me an hour to install, and that was with clear instructions from my professor.

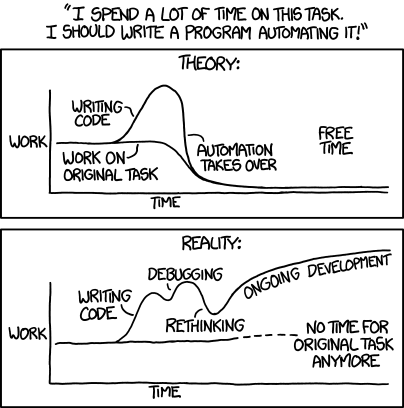

So I did what a good Software Engineer does and automated it.

In this post, I will cover two ways to install Hadoop:

- Automatic install in 5 minutes

- Manual install in 15ish minutes

Unless you are a Software Engineer who wants to install it manually, I’d recommend going with the automatic Vagrant install, as it’s faster and you don’t have to fiddle with your OS.

Vagrant also has the added benefit of keeping your local OS clean: you don’t have to install and troubleshoot different versions of the JDK and other packages.

Installing Hadoop in 5 Minutes with Vagrant

- Make sure you have the latest versions of VirtualBox and Vagrant installed.

- Download or clone the Vagrant Hadoop repository.

- Navigate to the directory where you downloaded the repo using the command line (want to learn the command line? I’ve got you covered: 7 Essential Linux Commands You Need To Know)

- Run `vagrant up`

- Done

That’s it. Once the installation is done, run `vagrant ssh` to access your Vagrant machine and use Hadoop.
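If `vagrant up` fails right away, the usual culprit is a missing prerequisite. Here is a minimal sketch of a check you could run first. `missing_tools` is a hypothetical helper name, and I'm assuming `VBoxManage` (the command-line tool that ships with VirtualBox) is a fair stand-in for "VirtualBox is installed".

```shell
#!/bin/sh
# Print the name of every tool passed in that is NOT found on PATH.
# (missing_tools is a hypothetical helper, not part of Vagrant or VirtualBox.)
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || echo "$tool"
  done
}

# Assumption: VBoxManage on PATH means VirtualBox is installed.
missing="$(missing_tools VBoxManage vagrant)"

if [ -n "$missing" ]; then
  # $missing is left unquoted on purpose so the names print on one line.
  echo "Install these first:" $missing
else
  echo "Prerequisites found. From the repo directory, run: vagrant up"
fi
```

The script only reports what it finds. It does not install anything or start the virtual machine for you.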

Installing Hadoop Manually on macOS and Linux

Warning: I’ve only tested these instructions on Linux (Ubuntu to be specific). On macOS, you may need to use different folders or install additional software.

Here are the instructions:

- Make sure that apt-get knows about the latest repos:

  `sudo apt-get update`

- Install Java:

  `sudo apt-get install openjdk-11-jdk`

- Download Hadoop:

  `wget http://mirrors.koehn.com/apache/hadoop/common/hadoop-3.2.0/hadoop-3.2.0.tar.gz`

- Copy the Hadoop files to `/usr/local/bin` (this is a personal preference, you can copy to any folder, just make sure you change the commands going forward):

  `sudo tar -xvzf hadoop-3.2.0.tar.gz -C /usr/local/bin/`

- Rename the hadoop folder. Again, this is a personal preference, you can leave it the way you want but you’ll need to change the paths going forward.

  `sudo mv /usr/local/bin/hadoop-3.2.0 /usr/local/bin/hadoop/`

- Update path variables:

- Update Hadoop environment variables:

- Generate an SSH key and add it to `authorized_keys` (thanks to Stack Overflow user sapy: Hadoop “Permission denied” warning)

- You’ll need to edit the `/usr/local/bin/hadoop/etc/hadoop/core-site.xml` file to add the `fs.defaultFS` setting. Here’s my configuration file:

- Next, edit the `/usr/local/bin/hadoop/etc/hadoop/hdfs-site.xml` file to add 3 properties. As SachinJ noted on Stack Overflow, HDFS will reset every time you reboot your OS without the first two of these. (Hadoop namenode needs to be formatted after every computer start)

- Initialize HDFS:

  `/usr/local/bin/hadoop/bin/hdfs namenode -format`

- Start DFS using:

  `/usr/local/bin/hadoop/sbin/start-dfs.sh`

- Test that everything is running fine by creating a directory in HDFS:

  `hdfs dfs -mkdir /test`
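The path variables, Hadoop environment variables and the two XML files mentioned in the steps above are not shown in this post. Here is a rough sketch of what they could look like. These are not the original files, and everything below is an assumption: `hdfs://localhost:9000` as the `fs.defaultFS` value, `dfs.namenode.name.dir` and `dfs.datanode.data.dir` as the two properties that keep HDFS data out of a temporary folder, `dfs.replication` set to 1 as the third, and the `JAVA_HOME` path where 64-bit Ubuntu usually puts OpenJDK 11. `OUT_DIR` and `DATA_DIR` are hypothetical names. The script writes to a scratch folder, so nothing in your real install is touched.

```shell
#!/bin/sh
# Sketch only: every value here is an assumption, not the author's original config.
HADOOP_HOME="${HADOOP_HOME:-/usr/local/bin/hadoop}"            # install path used in the steps above
JAVA_HOME="${JAVA_HOME:-/usr/lib/jvm/java-11-openjdk-amd64}"   # assumed OpenJDK 11 location on 64-bit Ubuntu
OUT_DIR="${OUT_DIR:-./hadoop-config-sketch}"                   # hypothetical scratch folder for review
DATA_DIR="${DATA_DIR:-$HOME/hadoop-data}"                      # hypothetical folder that survives reboots

mkdir -p "$OUT_DIR"

# Path variables: lines you could append to ~/.bashrc.
cat > "$OUT_DIR/hadoop-path.sh" <<EOF
export HADOOP_HOME=$HADOOP_HOME
export PATH=\$PATH:\$HADOOP_HOME/bin:\$HADOOP_HOME/sbin
EOF

# Hadoop environment variables: a line you could append to etc/hadoop/hadoop-env.sh.
cat > "$OUT_DIR/hadoop-env-additions.sh" <<EOF
export JAVA_HOME=$JAVA_HOME
EOF

# core-site.xml: tells clients where the namenode listens (assumed host and port).
cat > "$OUT_DIR/core-site.xml" <<EOF
<?xml version="1.0"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://localhost:9000</value>
  </property>
</configuration>
EOF

# hdfs-site.xml: two storage locations outside any temp folder, plus one replica.
cat > "$OUT_DIR/hdfs-site.xml" <<EOF
<?xml version="1.0"?>
<configuration>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>file://$DATA_DIR/namenode</value>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>file://$DATA_DIR/datanode</value>
  </property>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
</configuration>
EOF

echo "Wrote sketch files to $OUT_DIR"
```

After reviewing the output, you could append `hadoop-path.sh` to `~/.bashrc`, append `hadoop-env-additions.sh` to `/usr/local/bin/hadoop/etc/hadoop/hadoop-env.sh`, and copy the two XML files into `/usr/local/bin/hadoop/etc/hadoop/` before formatting the namenode.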

That’s it. If you got Hadoop working, do share the post to help others.

Faced any issues? Tell me in the comments and I’ll see how I can help.